MM'04, October 10-16, 2004, New York, New York, USA. 2004 1-58113-893-8/04/0010

Userradio

August Black

Media Arts and Technology Program

3431 South Hall

Santa Barbara, CA

14 July 2004

Abstract:

Categories and Subject Descriptors: J.m [Computer Applications]: Miscellaneous

General Terms: Design, Performance, Experimentation, Theory

Keywords: radio, broadcasting, streaming media, software, art

Introduction

In 1995, there was already intense audio-streaming activity on the net. Drawing on earlier communication projects[1], early events initiated by Kunstradio in Vienna[7,8], and later those by the Xchange network[16], sought to define strategies for converging the available communications technologies. These new forms of "broadcasting" were polymorphic by nature and, in the best cases, aimed to provoke new kinds of participation in the event. Little by little, these events found new ways to reformulate the monolithic one-to-many form of traditional broadcasting, supplementing or replacing it with many-to-many or many-to-one topologies. Now, many years later, streaming applications and technologies are more widely accepted and available, allowing more intricate mixtures both in the kinds of tools that can be created and employed and in the ways "radio" events can be performed.[17,11,14,15,6]

The Userradio Application

Userradio is a mixture of communication tools for collaborative on-line audio production. With this application, an unlimited number of individuals can simultaneously mix multiple channels of audio together from anywhere on-line, using a standard Flash-capable browser.

Like all internet communication tools, userradio consists of a server side and a client side. Between them is a database of sounds that both the clients and the server can access; all sound files entered into the database reside on the server's hard disk. By default, anyone is granted permission to use the system, including uploading audio material into the database. However, access can also be limited to specified IP addresses.
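The optional IP restriction can be pictured as an allowlist check performed when a client connects or uploads. The sketch below is an assumption, not the userradio code: `make_access_check` is a hypothetical helper, and it uses Python's standard `ipaddress` module so that both single addresses and whole subnets can be listed.

```python
import ipaddress
from typing import Callable, Iterable, Optional

def make_access_check(allowed: Optional[Iterable[str]] = None) -> Callable[[str], bool]:
    """Build a predicate deciding whether a client address may use the system.

    allowed=None mirrors the default (open to anyone); otherwise only the listed
    addresses or CIDR ranges are accepted. This is a hypothetical helper.
    """
    if allowed is None:
        return lambda addr: True
    # A bare address such as "198.51.100.7" becomes a one-host network.
    networks = [ipaddress.ip_network(a, strict=False) for a in allowed]
    return lambda addr: any(ipaddress.ip_address(addr) in net for net in networks)

check = make_access_check(["192.0.2.0/24", "198.51.100.7"])
print(check("192.0.2.42"), check("198.51.100.8"))
```

Keeping the open default as a separate branch makes the common case (no restriction) free of any parsing work.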

The Client Front-end

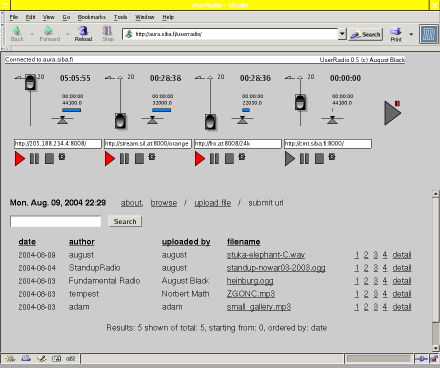

Users load the client application in their browsers, and the client automatically connects to the server. After a successful connection, the user can manipulate the graphical widgets in the client interface: moving or triggering the various faders and buttons sends control commands to the server. The control commands are formatted in XML and require little bandwidth.
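The paper does not publish the XML schema, so the following is only a sketch of how small such a control message can be. The `<command>` element and its `channel`, `action`, and `value` attributes are hypothetical names chosen for illustration.

```python
import xml.etree.ElementTree as ET
from typing import Optional, Tuple

def make_command(channel: int, action: str, value: Optional[float] = None) -> bytes:
    """Serialize one fader or button event as a small XML message (hypothetical schema)."""
    cmd = ET.Element("command", channel=str(channel), action=action)
    if value is not None:
        cmd.set("value", f"{value:.3f}")  # three decimals are plenty for a fader position
    return ET.tostring(cmd)

def parse_command(data: bytes) -> Tuple[int, str, Optional[float]]:
    """Inverse of make_command: recover channel, action and the optional value."""
    cmd = ET.fromstring(data)
    value = cmd.get("value")
    return int(cmd.get("channel")), cmd.get("action"), (float(value) if value is not None else None)

msg = make_command(2, "volume", 0.75)
print(msg.decode(), len(msg), "bytes")
```

A fader move then costs a few dozen bytes per message, far less than streaming the audio itself.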

The client application also listens for commands from the server. Any change on the server side (a file has stopped playing, or another client requests a different file for playing) is reflected in the client's visual display. Because of this, each user can see every change that takes place in the collaborative mix. For example, if client A raises the volume fader on her userradio front-end, clients B, C, and D will see the volume fader for that channel rise. [See figure 1.]
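The server-to-client path amounts to a shared state that is echoed to every connected client. The sketch below models it in memory, without networking; `SharedMix` and its listener callbacks are hypothetical stand-ins for the server and the client connections, not userradio's actual classes.

```python
from typing import Callable, Dict

# A listener receives (channel, parameter, value) updates from the server.
Listener = Callable[[int, str, float], None]

class SharedMix:
    """Server-side mix state; every change is echoed to all connected clients (a sketch)."""

    def __init__(self, n_channels: int) -> None:
        self.volume: Dict[int, float] = {ch: 0.0 for ch in range(n_channels)}
        self.clients: Dict[str, Listener] = {}

    def connect(self, name: str, listener: Listener) -> None:
        self.clients[name] = listener

    def set_volume(self, channel: int, value: float) -> None:
        # Clamp to the fader range, store it, then notify every client,
        # including the one that made the change, so all displays agree.
        self.volume[channel] = max(0.0, min(1.0, value))
        for listener in self.clients.values():
            listener(channel, "volume", self.volume[channel])

# Four clients, each keeping its own copy of what the faders show.
displays: Dict[str, Dict[tuple, float]] = {name: {} for name in "ABCD"}
mix = SharedMix(n_channels=4)
for name, display in displays.items():
    mix.connect(name, lambda ch, key, val, d=display: d.__setitem__((ch, key), val))

mix.set_volume(0, 0.8)  # client A raises the fader of channel 0
print({name: d[(0, "volume")] for name, d in displays.items()})
```

Echoing the change back to the sender as well means a client never has to trust its own local widget state; the server's copy is authoritative.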

Another important consideration in designing a client GUI for mixing live audio was how to cross-fade multiple audio channels with only one mouse. On a real mixing console, one normally needs two hands. For this purpose, the userradio client has a special auto-fade button that assigns timed fade-ins and fade-outs to each channel. One sets a time for the fade and moves the fader control on the volume bar to the point where the fade should end. A click on the auto-fade button then incrementally adjusts the volume from its current position to the end position.
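The auto-fade behaviour is essentially a linear ramp from the fader's current value to the chosen end value over the chosen time. A minimal sketch, assuming a fixed update interval (`step`, a hypothetical parameter) at which a new volume would be sent; the paper does not say whether the steps are generated by the client or the server.

```python
from typing import List, Tuple

def auto_fade_steps(current: float, target: float, fade_time: float,
                    step: float = 0.05) -> List[Tuple[float, float]]:
    """Return (seconds_from_start, volume) pairs for a linear fade from current to target."""
    n = max(1, round(fade_time / step))  # number of increments
    steps = []
    for i in range(1, n + 1):
        frac = i / n
        # Interpolate linearly; the final step is set to target to avoid rounding drift.
        volume = target if i == n else current + (target - current) * frac
        steps.append((fade_time * frac, volume))
    return steps

for t, v in auto_fade_steps(0.2, 1.0, fade_time=2.0, step=0.5):
    print(f"t={t:.1f}s volume={v:.2f}")
```

For a 2-second fade from 0.2 to 1.0 with a 0.5-second step, this yields roughly 0.4, 0.6, 0.8 and 1.0 at half-second intervals. Sending each step lets other clients watch the fader move; ramping on the server instead would save messages.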

The Server Back-end

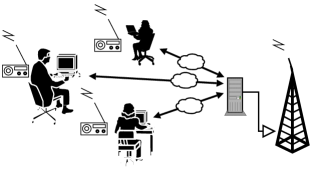

The server receives the commands for each audio channel, mixes the audio signals together, and plays the mixed sound on its sound card output, which is then broadcast on terrestrial radio. Ideally, the "users" are within the broadcast range and listen on the radio. [See figure 2.] Additionally or alternatively, the server uses the shoutcast~ external[10] to stream an MP3-encoded signal to an Icecast audio server[9]. Because the server and clients exchange only control signals, the actual audio output on the radio is more or less instantaneous. When audio is streamed on the net, however, latency does become an issue.
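What "mixes the audio signals together" means at the sample level fits in a few lines. In userradio this work is done inside the Pure Data patch, not in Python; the function below is only an illustrative sketch of a gain-weighted sum with hard clipping, and `mix_block` is a hypothetical name.

```python
from typing import List, Sequence

def mix_block(channels: Sequence[Sequence[float]], gains: Sequence[float]) -> List[float]:
    """Mix equal-length sample blocks: sum of gain * sample, hard-clipped to [-1, 1]."""
    if len(channels) != len(gains):
        raise ValueError("one gain (fader value) per channel is required")
    n = len(channels[0]) if channels else 0
    out = []
    for i in range(n):
        s = sum(g * ch[i] for ch, g in zip(channels, gains))
        out.append(max(-1.0, min(1.0, s)))  # keep the sum inside the output range
    return out

print(mix_block([[0.5, 0.5], [0.5, -0.5]], gains=[1.0, 0.5]))
```

The fader values arriving from the clients play the role of `gains`; clipping stands in for whatever limiting a real broadcast chain applies.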

The server software consists of a Pure Data[13,12] audio engine and a Java communications gateway. The Java gateway relays incoming messages in either XML or OSC (Open Sound Control)[5] to the audio application. The audio engine plays and mixes the audio. It includes an external* called readanysf~[2] that can play multiple file types, either from files on disk or streamed from the network. Because userradio streams its audio output on the internet and also uses readanysf~ internally to read streams from the net, multiple userradio servers can be linked together to form an intricate network of migratory audio.
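To make the gateway's relaying concrete, here is a sketch that turns an XML control message into an OSC 1.0 message (NUL-padded OSC-strings, a type-tag string, big-endian arguments). The XML schema and the `/userradio/<channel>/<action>` address pattern are assumptions for illustration; the paper specifies neither.

```python
import struct
import xml.etree.ElementTree as ET

def osc_string(s: str) -> bytes:
    """OSC-string: ASCII bytes plus at least one NUL, padded to a multiple of 4 bytes."""
    b = s.encode("ascii") + b"\x00"
    return b + b"\x00" * (-len(b) % 4)

def osc_message(address: str, *args) -> bytes:
    """Encode an OSC 1.0 message with int32 ('i'), float32 ('f') or string ('s') arguments."""
    tags, payload = ",", b""
    for a in args:
        if isinstance(a, int):
            tags, payload = tags + "i", payload + struct.pack(">i", a)
        elif isinstance(a, float):
            tags, payload = tags + "f", payload + struct.pack(">f", a)
        elif isinstance(a, str):
            tags, payload = tags + "s", payload + osc_string(a)
        else:
            raise TypeError(f"unsupported OSC argument: {a!r}")
    return osc_string(address) + osc_string(tags) + payload

def xml_to_osc(xml_cmd: bytes) -> bytes:
    """Relay step: map a hypothetical <command channel=".." action=".." value=".."/> to OSC."""
    cmd = ET.fromstring(xml_cmd)
    address = f"/userradio/{cmd.get('channel')}/{cmd.get('action')}"  # hypothetical address pattern
    value = cmd.get("value")
    return osc_message(address, float(value)) if value is not None else osc_message(address)

packet = xml_to_osc(b'<command channel="2" action="volume" value="0.5"/>')
print(packet)
```

Because every OSC field is padded to four bytes, the resulting packet length is always a multiple of four.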


Examples

Userradio is intended mainly for real-time control of FM radio: a mid- to low-grade computer with an audio output device can serve an analogue audio signal at a single location while simultaneously broadcasting a digital audio stream to multiple locations on a network. One example is the Fundamental Radio[4] show on Radio FRO in Linz, Austria, where an hour of radio has regularly been produced by two individuals working simultaneously from separate locations (their own apartments) away from the radio studio.

While userradio is built mostly as a live audio mixer, it could also be used to control audio through automation. Any kind of client interface that can read from and write to TCP/IP or UDP sockets could be built for it. A simple PHP or Python script could start, stop, and fade separate channels based on a timeline or some other criterion.
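Such an automation client might look like the following sketch. The timeline format, the XML message, and UDP port 9000 are all hypothetical; a real script would have to speak whatever command format the userradio gateway actually accepts.

```python
import socket
import time
from typing import Callable, List, Tuple

# (start time in seconds, channel, action, value); the actions are illustrative only.
Event = Tuple[float, int, str, float]

TIMELINE: List[Event] = [
    (0.0, 1, "play", 1.0),
    (0.0, 1, "volume", 0.8),
    (30.0, 2, "play", 1.0),
    (30.0, 1, "volume", 0.0),
    (60.0, 1, "stop", 0.0),
]

def to_message(channel: int, action: str, value: float) -> bytes:
    """Format one command as a small XML message (hypothetical schema)."""
    return f'<command channel="{channel}" action="{action}" value="{value}"/>'.encode()

def run_timeline(events: List[Event], send: Callable[[bytes], None],
                 sleep: Callable[[float], None] = time.sleep) -> None:
    """Send each event's command at its start time (events at the same time keep their order)."""
    elapsed = 0.0
    for start, channel, action, value in sorted(events, key=lambda e: e[0]):
        if start > elapsed:
            sleep(start - elapsed)
            elapsed = start
        send(to_message(channel, action, value))

def udp_sender(host: str = "127.0.0.1", port: int = 9000) -> Callable[[bytes], None]:
    """Return a send function that writes each command to a UDP socket (port is hypothetical)."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)

    def send(data: bytes) -> None:
        sock.sendto(data, (host, port))

    return send

# run_timeline(TIMELINE, udp_sender())  # would take about 60 s and send five UDP packets
```

`sleep` and `send` are passed in so the schedule can be checked without waiting or networking; the call in the final comment would play it for real.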

Userradio has also been used for other purposes, such as the Radiotopia project at the Ars Electronica Festival 2002[3], where the output from the 4 channels of userradio was projected from 4 separate sets of 160,000 Watt loudspeakers in the OMV Klangpark to create a 4-channel outdoor audio environment. Here, the democratic nature of userradio was exposed as several users either fought for control or learned to take turns. Also, as sounds were uploaded to the database, the most successful audio pieces turned out to be those that were less polished and mixed well with other sources.

Conclusions

While userradio allows a considerable amount of online control without requiring someone in the production studio to monitor the stream, it is not meant as a drop-in replacement for the hands-on immediacy of real studio equipment. It is meant simply to allow a different approach to production, in which the militant regularity of radio schedules can be matched with an online production entity that is always "on", waiting to be triggered. Additionally, it should encourage a much-needed communal or representative character in the radio medium, where the space of production is spread among its listeners.

Acknowledgments

The development of Userradio has kindly been supported by the Austrian Bundeskanzleramt and the US National Science Foundation's IGERT program in Interactive Digital Multimedia, Award #DGE-0221713.

Bibliography

[1] R. Adrian. Art and telecommunication, 1979-1986: The pioneer years. http://telematic.walkerart.org/overview/overview_adrian.html

[2] A. Black. readanysf~. http://aug.ment.org/readanysf

[3] A. Black, R. Huber, and N. Math. Radiotopia open air. http://www.aec.at/radiotopia

[4] A. Black and M. Seidl. Fundamental radio 1998-2003. http://funda.ment.org

[5] Center for New Music and Audio Technologies. OSC. http://cnmat.cnmat.berkeley.edu/OpenSoundControl

[6] furtherfield.org. Visitor's studio. http://www.furtherstudio.org/live/

[7] H. Grundmann. Horizontal radio: A report. http://kunstradio.at/HORRAD/horradisea.html, 1996.

[8] H. Grundmann. But is it radio? In R. Smits and R. Smits, editors, Acoustic Space 3. E-Lab & Xchange Network, 2000.

[9] Icecast Team. Icecast. http://icecast.org

[10] O. Matthes. shoutcast~. http://www.akustische-kunst.de/puredata/shout/index.html

[11] J. Mayr, M. Schnell, O. Thuns, and J. Wunschik. Streaps. http://streaps.org

[12] M. Puckette. Pure Data. http://crca.ucsd.edu/~msp

[13] M. Puckette. Pure Data. In Proceedings, International Computer Music Conference, pages 269-272. International Computer Music Association, 1996.

[14] Radio Free Asia. R-boss. http://techweb.rfa.org

[15] radioqualia. Frequency clock. http://www.frequencyclock.net/

[16] various. Xchange network. http://xchange.re-lab.net

[17] Waag Society for Old and New Media. Keyworx. http://www.keyworx.net/

* An "external" is an extra plug-in for the Pure Data environment.

2004-08-09